One of the most exciting developments in recent years is virtualization. Once thought of as a tool for the large enterprise, it has become affordable and attractive to the SMB market, making it practical for small and midsize businesses to run multiple computers on one physical host.

First, a history lesson…

The concept of virtualization dates back to the 1960s, when IBM began working on ways to run multiple processes on the same equipment. Powerhouse machines easily cost hundreds of thousands, if not millions, of dollars, and a computer sitting idle was an expensive asset delivering no benefit. To get the biggest bang for the buck, IBM engineers found a way for a computer to do multiple jobs at once; a mainframe could calculate a budget and collate a customer list at the same time, and the processor would spend far less time idle. Many of these ideas were incorporated into new chip designs, but for the most part the concept evolved into what we now call “multitasking”, which we use to this day. (AT&T advertises that on the iPhone, you can surf the Internet and talk on the phone at the same time, but I haven’t found much of a use for it yet.) The idea of virtualizing a whole computer largely faded in the 1980s and 1990s, as computers became more affordable and we didn’t worry as much about system idle time (not downtime, when your IT guy runs around like his hair is on fire).

Fast forward to today – the year 2010 (or is that the temperature here in Texas?), when we are once again trying to maximize our computer investment. Computers are far cheaper than their distant IBM ancestors, but they are more critical than ever. In 1965, if your computer crashed, you called the repair team, and your employees carried on with their work; it was mostly manual anyway. In 2010, if an employee’s computer crashes, that team member comes to a screeching halt; if the server crashes, your whole business comes to a screeching halt, and the costs start adding up.

In addition (and I’ve seen this with more clients than I’d care to admit), growing businesses run into “server creep”: they outgrow a server, or need one for a new initiative, and simply buy another one. Before they realize it, they are supporting six or seven individual servers on different hardware, each drawing power around the clock whether it is busy or not, and the business’s power demands creep steadily upward. The electric bill goes up, the heat from the additional servers goes up, and before you know it, money is being thrown at electrical upgrades, air conditioning, and space. A short-term decision has become a costly maintenance item. Scale that up to a large organization like HP, Coca-Cola, or even the US government, and the costs run into the millions.

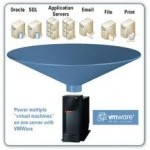

Then came virtualization, a way for one powerful machine to act as many smaller machines. Large companies could shrink their server farms down to a few boxes and run faster, using every last bit of processing power available to them. In large environments, the cost savings were immediate and easily justified the big dollars paid to virtualization vendors for the technology.

However, smaller companies didn’t see as big a benefit, and many wrote virtualization off altogether.

Until VMware came along and turned the industry on its head. Again. But I’m getting ahead of myself.

Let’s look at the benefits and risks of a VM environment, in general:

- Maintenance cost – Maintaining a server is a costly exercise. Setting aside the usual maintenance (updates, service packs, log reviews, etc.), the electricity for a typical server costs about $520/year, and the server puts out about 1,700 BTU/hr of heat. In an environment with six servers, for instance, that comes to $3,120/year and about 10,200 BTU/hr of heat, which you need to keep chilled. Replace those six machines with one highly reliable, beefy server, and you can cut the electric bill to about $2,180/year and the cooling load to about 7,160 BTU/hr – your AC isn’t working as hard, and you will get more life out of it. These are very rough example numbers; every situation is different, and if anything they are on the low side of the potential savings.

- Management cost – Servers need to be maintained. Routine maintenance can be done remotely through an SSH, RDP, VNC, or other connection, but what if you need to restart the server? Before I got involved in virtualization, if we had a server crash, I would need to drive two hours to our colocation facility, research and fix the issue, and drive two hours back; I lost four hours each time the server crashed. In a virtual world, that four-hour round trip is gone. As long as I can get into the physical host (the beefy server running my virtual machines), I can restart the virtual servers easily, usually faster than an old-fashioned hardware reset.

- Decreased downtime – I addressed the major issue with downtime above, but here’s another example: let’s say my server got a virus (happens to the best of us, sorry) that crashed the system. On a traditional system, I would need to pull backups and restore from the tape archives, a dicey and time-intensive process; if that doesn’t work, I need to reinstall the server from scratch. In the virtual world, I can roll back to a previous “snapshot” of my server and have it up and running in minutes. If, for some reason, the snapshot fails, I can restore a prepared image of the server to cut down on the rebuild time; instead of staring at a computer for an hour waiting for the software to reload, I can have my server back up and functional with a few clicks.

- Conservation of resources – When virtualization was first introduced, the goal was to maximize the use of the processors. As I added servers, more of them sat idle: some would be at full load, and some would be at 10% load if I was lucky (especially Web servers). With one beefy host, the processors are utilized far more fully, and if Server A needs more than I planned, a few clicks can give that virtual server another processor, more memory, or whatever it needs.

- N-level flexibility – Higher-end servers get better parts, just like an Infiniti has better parts than a Hyundai (I was thinking Yugo, but I didn’t want to date myself). Better parts, and more of them, mean more options. Need a dedicated Ethernet port for a server? No problem; the big server has four, and only one of them is being used right now. Need more memory or processors? Just click here. Need to put three machines on a VLAN and the fourth in a DMZ? Use this wizard. The possibilities are limited only by the host hardware, and that is a much better server than you’ve used in the past.

- Ease of transfer – Congratulations, you’ve used your server for three years, Bob Johnson just landed the biggest account of his life, and your business doubled overnight. Under the old system, you would have to add more servers (more cost, more heat, etc.). Under virtualization, you can over-spec your VMs in the meantime (not really recommended, but a workable short-term band-aid), and when your new host comes in, just move the VM files from the old server to the new one. Nothing else has to change until you want it to.

- Sandbox – My kids have spent hours in the sandbox, or on the beach, playing with new ideas in castle construction and sand-shovel irrigation projects. When they’re done, Mother Nature erases their projects and makes the sand ready for the next little dreamer. VM sandboxes are the same: you can test out the new server, toy, etc. in isolation and do virtually anything you want, and when you’re done, click Delete and the VM is gone. That beats hoping the old desktop in the back of the broom closet can run Exchange 2010 before you have to install it live for the entire company.
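The arithmetic in the maintenance-cost bullet is easy to check with a few lines of Python. This is a minimal sketch that uses only the rough per-server and consolidated-host figures quoted above; the 3.412 BTU/hr-per-watt conversion is a standard physical figure added here (it is not from the original example) to express the heat savings as electrical draw.

```python
# Rough yearly electricity cost and heat load, before and after consolidation.
# Dollar and BTU figures are the rough examples from the maintenance-cost
# bullet; BTU_PER_WATT is a standard conversion added for illustration.

COST_PER_SERVER = 520      # dollars per year for one typical server
HEAT_PER_SERVER = 1_700    # BTU/hr of heat from one typical server
SERVER_COUNT = 6

HOST_COST = 2_180          # dollars per year for one beefy consolidated host
HOST_HEAT = 7_160          # BTU/hr of heat from that host

BTU_PER_WATT = 3.412       # 1 W of continuous draw gives off about 3.412 BTU/hr


def fleet_totals(count: int) -> tuple[int, int]:
    """Return (yearly cost in dollars, heat in BTU/hr) for `count` identical servers."""
    return count * COST_PER_SERVER, count * HEAT_PER_SERVER


before_cost, before_heat = fleet_totals(SERVER_COUNT)
saved_cost = before_cost - HOST_COST
saved_heat = before_heat - HOST_HEAT

print(f"{SERVER_COUNT} servers: ${before_cost:,}/yr, {before_heat:,} BTU/hr")
print(f"One host:  ${HOST_COST:,}/yr, {HOST_HEAT:,} BTU/hr")
print(f"Savings:   ${saved_cost:,}/yr, {saved_heat:,} BTU/hr less to cool")
print(f"Heat saved is roughly {saved_heat / BTU_PER_WATT:,.0f} W of continuous draw")
```

To estimate your own environment, swap in your per-server and host figures; the calculation itself stays the same.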

And now, the risks:

- Costs – High-end servers are pricey. Be sure to calculate the ROI over the server’s usable life (no more than four years – I prefer three).

- Single point of failure – If a virtual server dies, a few clicks restart it. If your physical host dies, every VM on it goes down with it, and you’re dead in the water. Large companies use failover configurations to prevent this. We recommend a failover configuration, but we realize it isn’t always feasible. If it isn’t, at least be sure to get good warranty coverage – Dell offers some great coverage on their servers (for the record, I’m not a Dell fan, but I have to recognize excellence when I see it).

- UPS (Uninterruptible Power Supply) – Maybe you held off on getting the backup battery before. Don’t even think about skipping it with a virtual server. While the system is very resilient, the VMs themselves are nothing more than files on the host, and a sudden power loss can leave them corrupted; you want to be sure they shut down properly.

- Management tools – Do a search on the Internet, and you will find a virtual cornucopia (I bet a friend of mine I could use that word in conversation) of information and trial versions of programs to manage your network; some are free, but most cost money. Be careful when evaluating these programs: many of them are bloatware full of features you will never use, or simply duplicate tasks you can already do for free.
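To put the cost risk in numbers, here is a minimal sketch of a simple, undiscounted ROI and payback calculation over a three- or four-year usable life. Only the $940/year electricity saving is derived from the maintenance-cost example earlier in this post; the host price and the “other savings” figure are hypothetical placeholders, so substitute your own quote and estimates.

```python
# Simple (undiscounted) ROI and payback for a virtualization host.
# HOST_PRICE and OTHER_SAVINGS are hypothetical placeholders, not real quotes.
HOST_PRICE = 6_000                 # hypothetical up-front cost of the host, dollars
ELECTRIC_SAVINGS = 3_120 - 2_180   # dollars/yr, from the maintenance-cost example
OTHER_SAVINGS = 1_500              # hypothetical: avoided hardware, labor, trips, dollars/yr


def roi(upfront: float, annual_savings: float, years: int) -> float:
    """Net savings over `years` of use, as a fraction of the up-front cost."""
    return (annual_savings * years - upfront) / upfront


def payback_years(upfront: float, annual_savings: float) -> float:
    """Years until cumulative savings cover the up-front cost."""
    return upfront / annual_savings


annual = ELECTRIC_SAVINGS + OTHER_SAVINGS
for life in (3, 4):  # three- to four-year usable life, as recommended above
    print(f"{life}-year ROI: {roi(HOST_PRICE, annual, life):.0%}")
print(f"Payback after about {payback_years(HOST_PRICE, annual):.1f} years")
```

A more careful analysis would discount future savings, but even this rough version shows why the assumed usable life matters so much to the answer.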

There are plenty of tools to get you started in the world of virtualization, but I recommend discussing your needs with a computer professional before signing that big check. You can also start experimenting with virtualization now on some of your old equipment, to get familiar with the benefits and caveats of a virtual environment.